To be honest, I don’t really know how and what to write about Power Platform anymore. I mean, of course, Power Platform is still there, I still do work in that space, I see a lot of benefits to having the ability to call all those connectors, I still think Power Apps make it easier to develop applications… but I just don’t see Power Platform as a holy grail of fast development anymore. And, besides, it’s never been my tool of choice for “personal projects” development.

These days, though, one can use Claude or ChatGPT to go from a concept to a product in almost no time, without having to do much development work at all. I have not decided yet whether that's exciting or, actually, depressing, but it is the reality.

I've done it a few times in the last two years with different personal projects, and the quality of coding agents has improved quite a bit during this time. Two years ago I might still have had doubts, but right now I think that if we want to talk about whether "AI coding agents are going to change the way developers work", we are not even asking the right question. That ship has sailed. We should only be asking "how" the work is going to change.

The main caveat to all these discussions, it seems, is that AI usage relies on some naturally scarce resources. And, also (partially because of that), you can’t rely on the AI to properly follow all the best practices you may need to follow in your particular situation.

As far as resources go, the context window is still what really breaks it in the end. Sure, AI agents will apply tricks to summarize the discussion every now and then, but in the process they lose some context, and, all of a sudden, they need to re-read the code, for instance, or they suggest ideas that have already been looked into.

You can probably work around such issues by compartmentalizing (did I spell that correctly??) development, focusing on certain areas per session so that the context window does not become flooded with unrelated concepts.

You may also need to decide on the best models to use: some are faster, some are slower, some are cheaper, some are more expensive. There are usage costs, too. More advanced models cost more to use, but you don't always need them.

As for best practices and the quality of your product in general, that's somewhat related to the memory/cost issues as well, but, ultimately, the problem is that AI does not solve "product quality" issues. It can address them on a limited scale, but, much like human developers, it can easily introduce regressions, miss certain requirements, or simply misunderstand what needs to be done. Even from a purely technical perspective, an AI agent with a context window of "X" tokens can only keep that much in mind when developing code. Everything else is simply not going to be considered.

So you still need QA, you still need security and architecture reviews, and you still need someone who knows how that code works. Funnily enough, humans are often more efficient at providing some of the resources AI agents are unable to provide in the same quantities. As in, a human developer or architect can keep a lot of best practices, architectural decisions, and business requirements in mind without having to load and unload "readme" files (even though we often rely on "notes", too). That said, how quickly can human developers access that information? AI agents can do it in a heartbeat, and it takes us way longer to do the same. So, I guess, if those overall AI limitations get resolved somehow, AI will simply take over. But that's a big "if" for now.

Still, in the context of smaller projects, perhaps even those that may feel semi-personal, there is a lot one can do with AI, and it can really be done fast. I am not talking about AI assistance in script development; I'm talking about the full cycle:

- You can fine-tune your idea with ChatGPT. Yes, some of us may be biased against doing that. As in, what can AI tell us that we don't already know, beyond the relatively obvious? It's all coming from the training data, right? And, fundamentally, LLMs can't really "invent" anything. Still, if you have not tried it yet, you should. The level of clarity you can reach simply by discussing an idea with an LLM, and the speed at which you get there, are hard to imagine or explain until you see them for yourself.

- You can prototype in hours or days. You can even take it further and come up with something that's way more than a prototype in just a week or two. Keep the caveats above in mind, though. Either you get involved, not only guiding the AI agent but also understanding what's happening, how you are going to deploy the thing, whether it's all still consistent, etc., or you greatly increase your budget, get a huge context window, and let the AI agent go wild. I prefer to maintain some control over what's going on, so my choice is obvious.

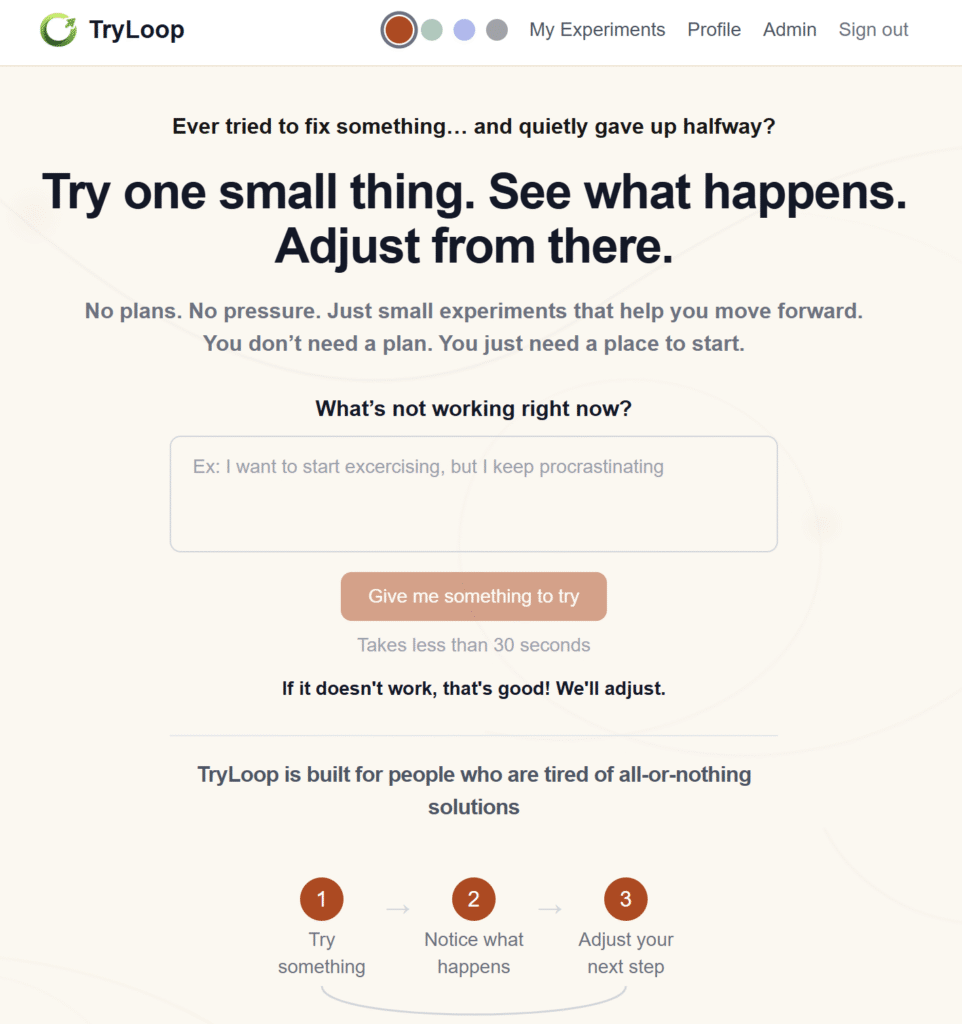

What you get as a result depends. As an example, though, have a look at this: Try Loop

In a nutshell, it's a very specialized tool that suggests simple actions one can try to start moving towards solving a particular problem. Yes, it is AI-based, but it is also human-controlled, so it's a blended approach. I did not have to blow through my usual Claude "PRO" plan budget to develop it. It took me a couple of weeks, and I got ChatGPT involved quite a bit to validate, review, and improve the overall idea of the tool.

Anyway, that's the project. Built with AI, using AI internally, but with a human in the loop. It's all about taking small steps, and it was launched without a proper plan. Feels appropriate, eh? Try it here; I'd appreciate some feedback. More to come on what worked, what didn't, and how it was actually built.