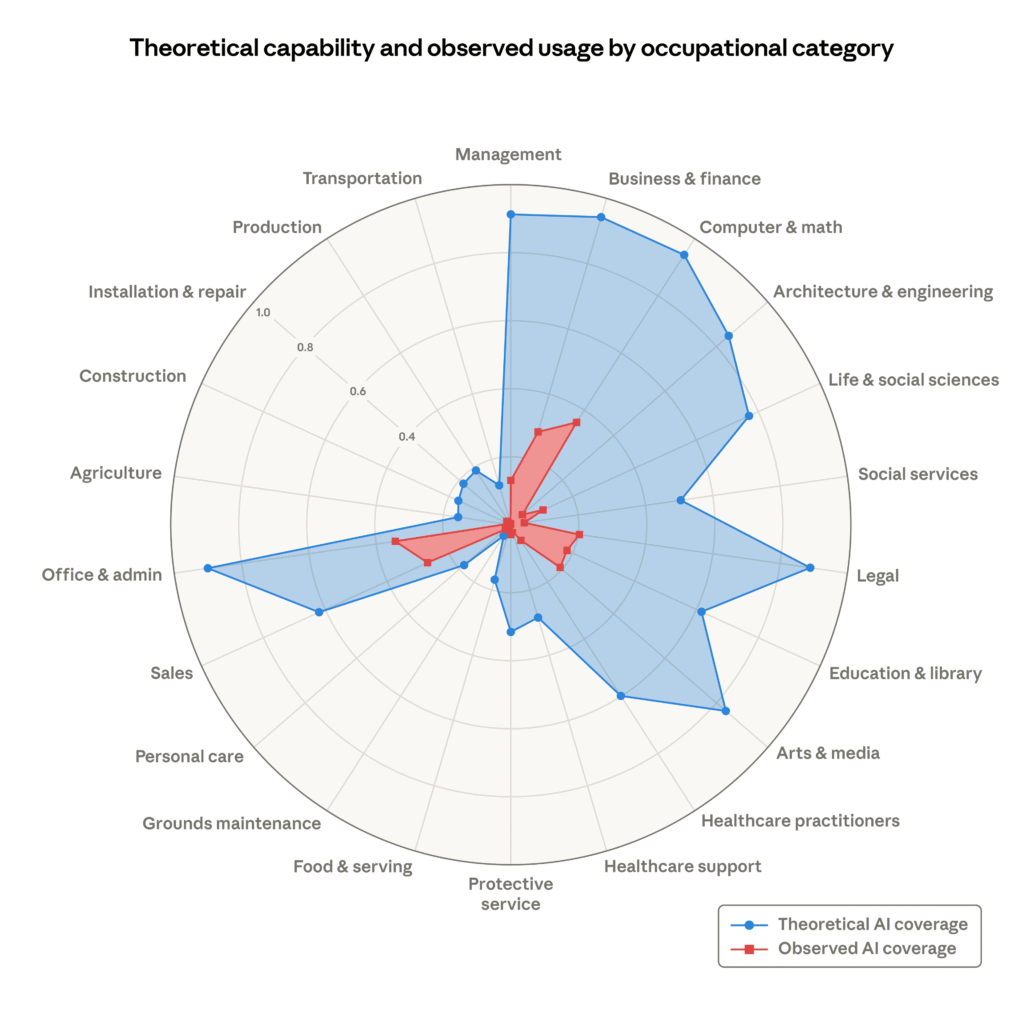

Anthropic published a research paper back in March, and it has a nice diagram of the theoretical vs observed AI exposure by occupational category.

For example, the Computer & Math category is highly exposed in theory (AI can do a lot there), but in practice AI is still underused there.

I am not going to tell you how to interpret this diagram – there is some methodology behind it, and I am not even sure I fully understand all the assumptions, but intuitively it makes sense. Any intellectual work is highly exposed in theory but, for now, is perhaps not yet “there” in practice. Any “physical” work, on the other hand, is much less exposed to AI as we know it today – we’d probably need to equip AI with some robotic extensions first.

Looking at this diagram and being in IT, I can probably continue panicking in my spare time, since my occupation is extremely exposed, but I don’t want to be doing it alone, so have a look:

Source: https://www.anthropic.com/research/labor-market-impacts

Now you may say that asking AI some questions and sharing its answers is not creative, but I would disagree. It does not matter who or what provides those answers and opinions; what matters is whether they are sound, challenging, possibly entertaining, etc.

So I had a discussion with Claude earlier this week on a very related topic, and what stuck with me is the conclusion: there may not be a clean answer equivalent to “learn to code in 2005.” The more likely reality is a period of genuine disruption where some people do well and many don’t, and the careers that hold up are ones where the full loop — judgment, relationships, accountability, creativity — matter together, not just one piece. Fields like teaching, healthcare (patient-facing), entrepreneurship, and design-heavy work fit that. But none of them are easy or guaranteed either.

If you are curious, the original question was whether it makes sense for a high school graduate to “learn to code” today (compared to how the answer was almost obvious back in the late ’90s). Part of the answer Claude provided roughly corresponds to what Anthropic mentioned in the research paper above: jobs might be following the O-Ring model, in the sense that if a single task can’t be done properly, the whole job falls apart. That is possibly why AI penetration in software development is not that overwhelming, at least not yet.
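To make the O-Ring intuition a bit more concrete, here is a toy sketch in Python. It is my own simplification, not the paper's methodology: it assumes a job is a chain of tasks, each with some probability of being done properly, and that the job as a whole only works if every task does. The function names and the numbers are made up purely for illustration.

```python
from math import prod


def job_quality(task_qualities):
    """O-Ring style quality: the product of per-task success probabilities.

    One weak task drags the whole job down, no matter how good the rest are.
    """
    return prod(task_qualities)


def average_quality(task_qualities):
    """Naive view for comparison: just average the per-task numbers."""
    return sum(task_qualities) / len(task_qualities)


# Hypothetical job: nine tasks done almost perfectly, one done poorly.
tasks = [0.99] * 9 + [0.5]

print(f"average task quality: {average_quality(tasks):.2f}")
print(f"O-Ring job quality:   {job_quality(tasks):.2f}")
```

Under these assumptions the average looks great while the multiplicative score is dominated by the single weak task, which is the "whole job falls apart" effect in miniature.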

But hiring is down, layoffs are happening periodically, AI coding agents are improving, and context windows are growing, so the impact is hard to ignore, and that research paper hints that we have not reached the lowest point yet.